Validation Mindset: Why Verification Is Non‑Negotiable

In legal practice, “plausible” is not good enough. AI output must be verified against authoritative sources before use in advice, filings, or client communication.

Key takeaways

- Verification is mandatory for anything beyond low-stakes drafting.

- Treat AI output like a junior draft: helpful, not authoritative.

- Document your validation steps for defensibility.

Your baseline rule

Never rely on an AI-generated citation, quote, or factual claim without checking it against the source. Verification costs far less than responding to a sanctions motion.

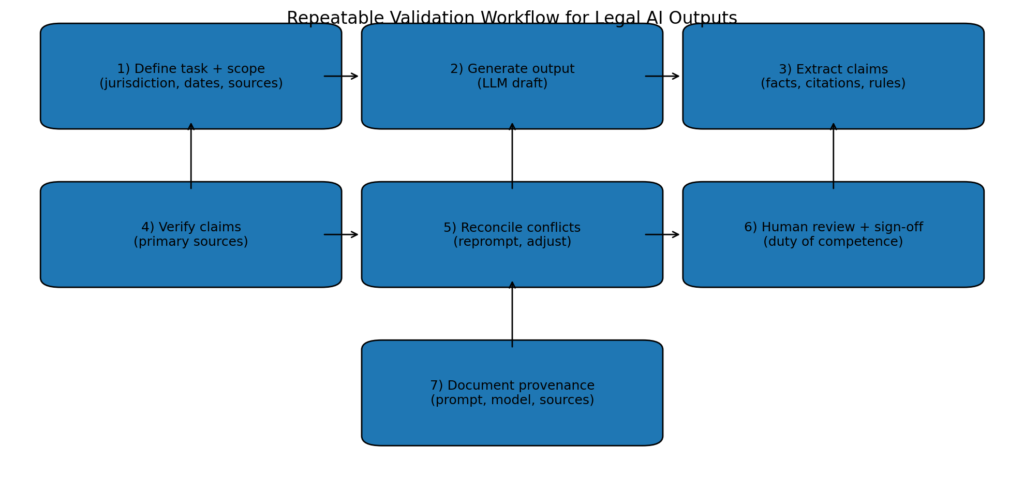

A defensible workflow

- Generate a draft with the model.

- Extract every factual claim, quotation, and citation into a checklist.

- Verify using primary sources (cases/statutes) and trusted secondary sources.

- Revise the draft with corrected citations and tightened reasoning.

- Keep a record of what you checked, which source you used, and when (for defensibility).
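The checklist and record-keeping steps above can be sketched as a small verification log. This is a minimal sketch, assuming Python 3.9+. The names `CITATION_PATTERN`, `VerificationItem`, `extract_citations`, `record_check`, and `audit_log` are hypothetical, and the regex only recognizes a few common U.S. reporter formats. It supplements a manual read of the draft and does not replace it.

```python
import re
from dataclasses import dataclass
from datetime import date
from typing import Optional

# Hypothetical, deliberately narrow pattern: volume, reporter, first page
# (e.g. "123 F.3d 456", "45 F. Supp. 2d 789", "550 U.S. 544").
# Many valid citation forms will be missed, so read the draft manually too.
CITATION_PATTERN = re.compile(
    r"\b\d{1,4}\s+"
    r"(?:U\.S\.|S\.\s?Ct\.|F\.\s?Supp\.(?:\s?[23]d)?|F\.(?:\s?(?:2d|3d|4th))?)"
    r"\s+\d{1,5}\b"
)


@dataclass
class VerificationItem:
    claim: str                            # citation text as it appears in the draft
    verified: bool = False                # True only after checking a primary source
    source_checked: Optional[str] = None  # where the check was done
    checked_on: Optional[date] = None     # when the check was done
    note: str = ""                        # discrepancies, corrections, etc.


def extract_citations(draft: str) -> list[VerificationItem]:
    """Return one checklist item per distinct citation, in order of first appearance."""
    seen: dict[str, VerificationItem] = {}
    for match in CITATION_PATTERN.finditer(draft):
        text = match.group(0)
        if text not in seen:
            seen[text] = VerificationItem(claim=text)
    return list(seen.values())


def record_check(item: VerificationItem, source: str, verified: bool, note: str = "") -> None:
    """Record the outcome of checking one item, stamped with today's date."""
    item.source_checked = source
    item.verified = verified
    item.checked_on = date.today()
    item.note = note


def unverified(items: list[VerificationItem]) -> list[VerificationItem]:
    """Items that must not be relied on yet."""
    return [i for i in items if not i.verified]


def audit_log(items: list[VerificationItem]) -> str:
    """Tab-separated record of what was checked, where, and when."""
    lines = []
    for i in items:
        status = "VERIFIED" if i.verified else "UNVERIFIED"
        lines.append(f"{status}\t{i.claim}\t{i.source_checked or '-'}\t{i.checked_on or '-'}\t{i.note}")
    return "\n".join(lines)


if __name__ == "__main__":
    # Placeholder citations used only to exercise the pattern; they are not
    # asserted to be real authorities, which is exactly what must be checked.
    draft = "See 123 F.3d 456; compare 45 F. Supp. 2d 789."
    checklist = extract_citations(draft)
    record_check(checklist[0], source="official reporter (hypothetical)", verified=True)
    print(audit_log(checklist))
```

A pattern match only finds citation-shaped strings. It cannot tell whether a case exists, whether it says what the draft claims, or whether it is still good law, so each item still has to be pulled and read in a primary source before it is marked verified.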

When to stop using the model

If the model repeatedly invents citations, refuses to follow constraints, or cannot stay within a jurisdiction, stop and switch to traditional research tools or a retrieval system that uses a vetted database.

Reference example

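Example of why verification matters: Mata v. Avianca, Inc. (S.D.N.Y. June 22, 2023), in which the court sanctioned attorneys who filed a brief citing nonexistent cases generated by ChatGPT.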