Prompt Debugging: Lost Middle, Ambiguity, and Token Hygiene

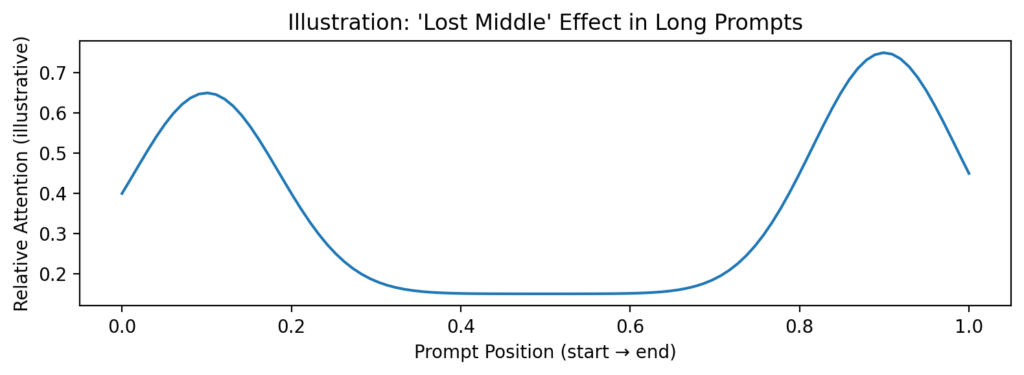

When prompts get long, models can overlook details, especially when critical constraints sit in the middle of the prompt. Put key rules up front and repeat them at the end.
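
As a minimal sketch of the "up front and again at the end" advice, the helper below wraps a long prompt body between two copies of the same rules. The function name `sandwich_prompt` and the section labels (`Rules:`, `Reminder:`) are illustrative assumptions, not a standard format.

```python
def sandwich_prompt(rules, body):
    """Place the rules before the long body and restate them after it.

    `rules` is a list of short constraint strings; `body` is the long
    middle section (context, documents, question). Hypothetical helper.
    """
    rule_block = "\n".join(f"- {rule}" for rule in rules)
    return (
        f"Rules:\n{rule_block}\n\n"
        f"{body}\n\n"
        f"Reminder: the rules above still apply.\n{rule_block}"
    )


if __name__ == "__main__":
    print(sandwich_prompt(
        ["Do not invent citations", "Answer in English"],
        "Context: <long material here>\n\nQuestion: What changed in v2?",
    ))
```

Repeating the rules costs a few tokens, so this is most useful when the body is long enough that a mid-prompt constraint could plausibly be missed.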

Common failure modes

- Ambiguity: the model guesses what you meant.

- Lost middle: constraints placed in the middle of a long prompt get ignored.

- Overload: too much irrelevant context dilutes the signal.

Debugging checklist

- Move the key question to the top.

- Reduce context to only what is necessary.

- Add explicit constraints (“Do not invent citations”).

- Ask for a short answer first, then expand.
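
The checklist above could be applied mechanically along these lines. This is a rough sketch, not a recommended retrieval method: `build_debugged_prompt` is a hypothetical helper, and ranking snippets by word overlap with the question is a deliberately crude stand-in for whatever relevance filter you actually use.

```python
import re


def _words(text):
    """Lowercased word set, used as a crude relevance signal (assumption)."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def build_debugged_prompt(question, snippets, constraints, max_snippets=2):
    """Assemble a prompt following the checklist: question first,
    trimmed context, explicit constraints, short answer first."""
    q_words = _words(question)
    # Reduce context: keep only the snippets that overlap most with the question.
    ranked = sorted(snippets, key=lambda s: len(q_words & _words(s)), reverse=True)
    kept = [s for s in ranked[:max_snippets] if q_words & _words(s)]

    parts = [f"Question: {question}"]  # key question at the top
    if kept:
        parts.append("Context:\n" + "\n".join(f"- {s}" for s in kept))
    parts.append("Constraints:\n" + "\n".join(f"- {c}" for c in constraints))
    parts.append("Answer in one or two sentences first. Expand only if asked.")
    return "\n\n".join(parts)


if __name__ == "__main__":
    print(build_debugged_prompt(
        "What is the refund window?",
        [
            "The refund window is 30 days.",
            "Our office dog is named Biscuit.",
            "Refund requests go through the billing portal.",
        ],
        ["Do not invent citations"],
    ))
```

Word overlap counts common words such as "is" and "the", so a real filter would need stop-word handling or embeddings. The point here is only the ordering and trimming of the prompt.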

A practical pattern: draft → critique → revise

- Step 1: Draft.

- Step 2: Critique the draft for factual risk, missing issues, and clarity.

- Step 3: Revise using the critique.
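
The three steps can be sketched as three chained model calls. The code below assumes a hypothetical `llm` callable that takes a prompt string and returns the model's text. It is not tied to any particular provider's API, and the prompt wording is illustrative.

```python
from typing import Callable, Dict


def draft_critique_revise(task: str, llm: Callable[[str], str]) -> Dict[str, str]:
    """Run draft -> critique -> revise with a caller-supplied `llm` function."""
    # Step 1: draft.
    draft = llm(f"Task: {task}\n\nWrite a first draft.")

    # Step 2: critique the draft for factual risk, missing issues, and clarity.
    critique = llm(
        "Critique the draft below for factual risk, missing issues, and clarity.\n\n"
        f"Task: {task}\n\nDraft:\n{draft}"
    )

    # Step 3: revise using the critique.
    revised = llm(
        "Revise the draft using the critique. Return only the revised text.\n\n"
        f"Task: {task}\n\nDraft:\n{draft}\n\nCritique:\n{critique}"
    )
    return {"draft": draft, "critique": critique, "revised": revised}
```

Returning all three intermediate texts, rather than only the revision, makes it easier to see whether a bad final answer came from the draft or from a weak critique.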